Chess programs using traditional MCTS were much weaker than alpha-beta search programs (4, 24), while alpha-beta programs based on neural networks have previously been unable to compete with faster, handcrafted evaluation functions.

You should also set eval_save_interval to a number that is lower than the number of positions in your training data, perhaps also 1/10 of the original value. The validation file should be set to the new validation data, not the old data.

After training is finished, your new net should be located in the "final" folder under the "evalsave" directory. You should test this new network against the older network to see if there are any improvements.
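The one-tenth scaling suggested for the second run can be worked through with a small helper. This is only a sketch: the function name, the example numbers, and the fallback of half the position count when the scaled interval is still too large are all assumptions for illustration, not values from the training tools.

```python
def second_run_settings(first_run_positions, first_run_save_interval):
    """Scale first-run values to roughly one tenth for the second run."""
    positions = first_run_positions // 10
    save_interval = first_run_save_interval // 10
    # The text asks for an interval lower than the number of training positions.
    # Falling back to half the position count is an assumption made here.
    if save_interval >= positions:
        save_interval = max(1, positions // 2)
    return positions, save_interval


# Hypothetical first-run values, for illustration only.
positions, save_interval = second_run_settings(100_000_000, 250_000_000)
print(f"positions to generate: {positions}, eval_save_interval: {save_interval}")
```

The resulting interval would then be passed as eval_save_interval in the training command you already use.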

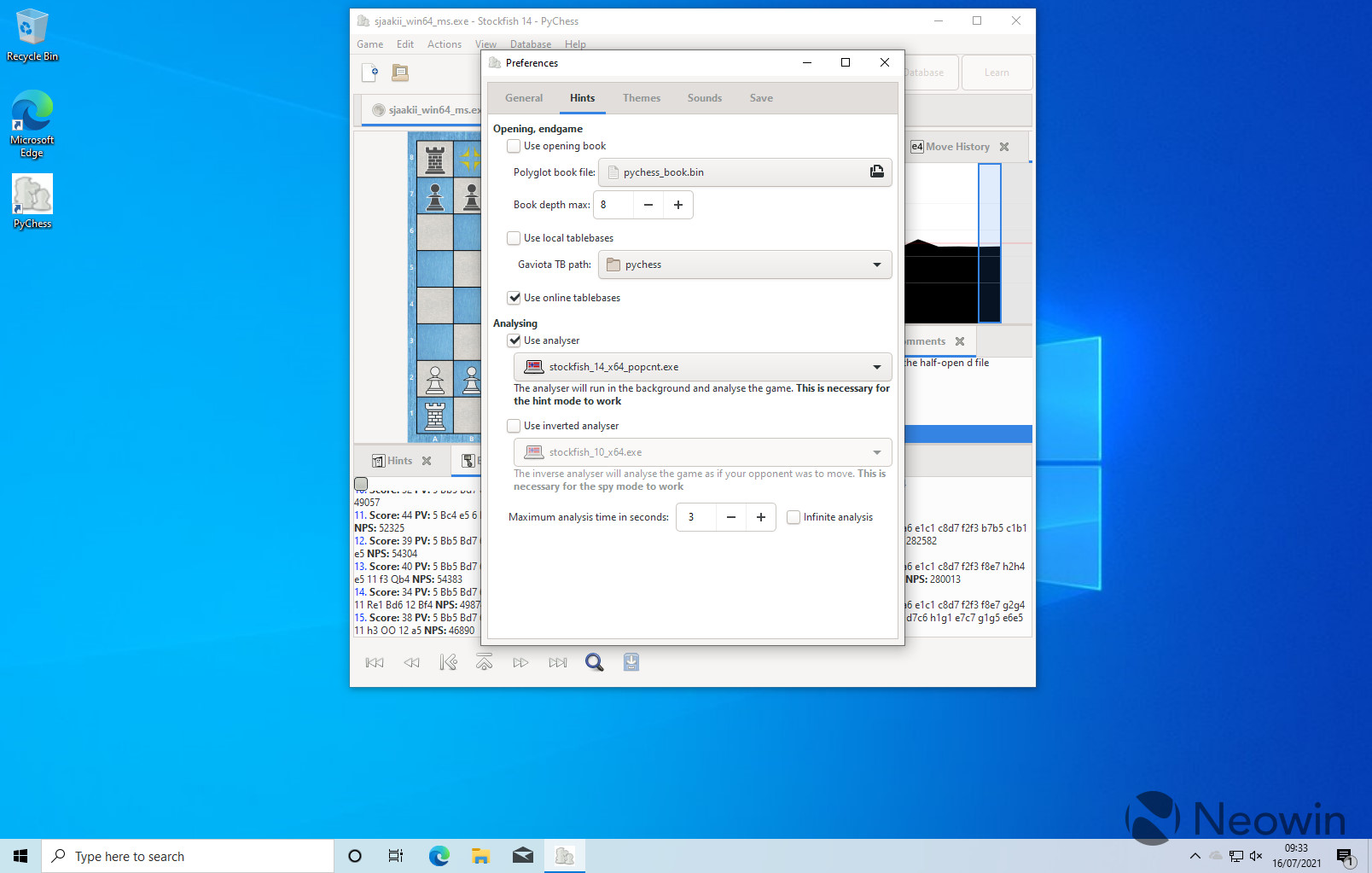

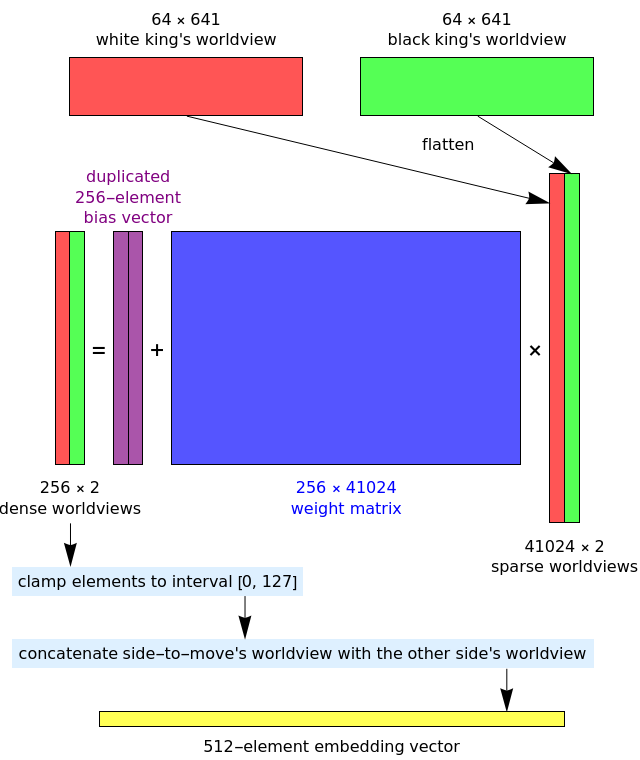

Make sure that your previously trained network is in the eval folder. Make sure SkipLoadingEval is set to false, so that the generated data uses the neural net's eval, by typing the command uci, followed by setoption name SkipLoadingEval value false, before typing the isready command. You should aim to generate fewer positions than in the first run, around 1/10 of the number of positions generated in the first run. You should also do the same for the validation data, with the depth being higher than in the last run.

After you have generated the training data, you must move it into your training data folder and delete the older data so that the binary does not accidentally train on the same data again. Do the same for the validation data and name it val-1.bin to make it less confusing. Then, using the same binary, type in the training commands shown above. Do NOT set SkipLoadingEval to true; it must be false, or you will get a completely new network instead of a network trained with reinforcement learning.

The NNUE evaluation was first introduced in shogi and ported to Stockfish afterward. It can be evaluated efficiently on CPUs, and it exploits the fact that only parts of the neural network need to be updated after a typical chess move. The nodchip repository provides additional tools to train and develop the NNUE networks. Stockfish was the best chess program and took human experts more than a decade to develop.
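The remark that only parts of the network need to be updated after a typical move can be illustrated with a toy accumulator. This is a minimal sketch of the general idea, not Stockfish's actual code: the class name, the tiny layer sizes, and the integer feature indices are all hypothetical.

```python
import random


class Accumulator:
    """First-layer sums kept up to date incrementally (toy sizes)."""

    def __init__(self, weights, active_features):
        # weights[f] is the vector that input feature f adds to the first layer.
        self.weights = weights
        self.values = [0] * len(weights[0])
        for f in active_features:
            self._add(f)

    def _add(self, f):
        for i, w in enumerate(self.weights[f]):
            self.values[i] += w

    def _remove(self, f):
        for i, w in enumerate(self.weights[f]):
            self.values[i] -= w

    def apply_move(self, removed, added):
        # Only the features changed by the move are touched;
        # the contributions of all other features are reused as they are.
        for f in removed:
            self._remove(f)
        for f in added:
            self._add(f)


rng = random.Random(0)
weights = [[rng.randint(-8, 8) for _ in range(4)] for _ in range(16)]

acc = Accumulator(weights, active_features={1, 5, 9})
acc.apply_move(removed=[5], added=[6])      # a "move" flips two features

fresh = Accumulator(weights, active_features={1, 6, 9})  # full recomputation
print(acc.values == fresh.values)
```

The incremental update costs work proportional to the number of changed features, while the full recomputation costs work proportional to all active features.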

If you would like to do some reinforcement learning on your original network, you must first generate training data using the learn binaries.
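The option sequence to send before generating data can be sketched as follows. It assumes that `uci` and `setoption` are sent as separate lines, as in the UCI protocol; the helper names are hypothetical, and in practice the stream would be the standard input of the learn binary rather than an in-memory buffer.

```python
import io


def uci_preamble(skip_loading_eval=False):
    """Return the lines to send before starting data generation."""
    value = "true" if skip_loading_eval else "false"
    return [
        "uci",
        f"setoption name SkipLoadingEval value {value}",
        "isready",
    ]


def send(commands, stream):
    """Write each command on its own line to a text stream."""
    for command in commands:
        stream.write(command + "\n")
    stream.flush()


# An in-memory buffer stands in for the engine's stdin in this sketch.
buffer = io.StringIO()
send(uci_preamble(), buffer)
print(buffer.getvalue(), end="")
```

Keeping the default at false matches the warning above that the existing network must be loaded for reinforcement learning.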
